
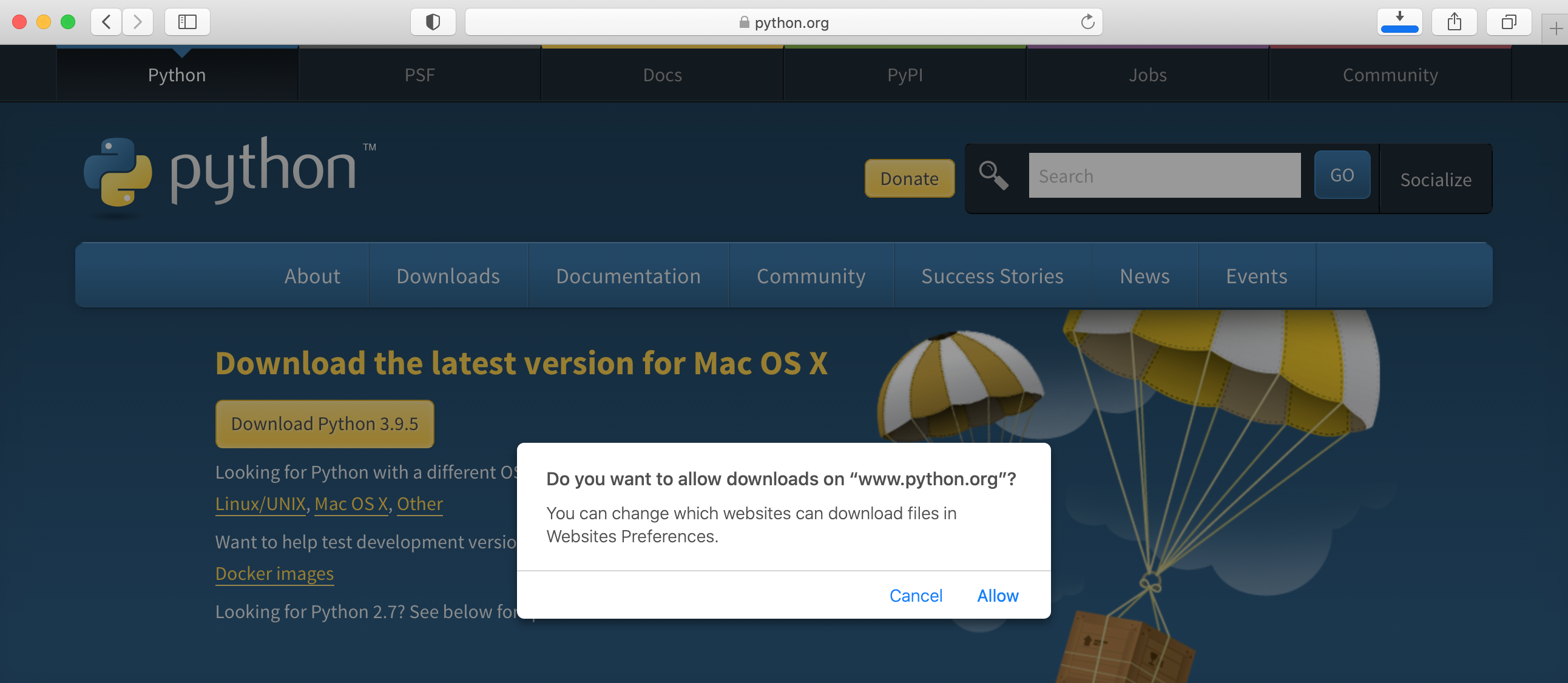

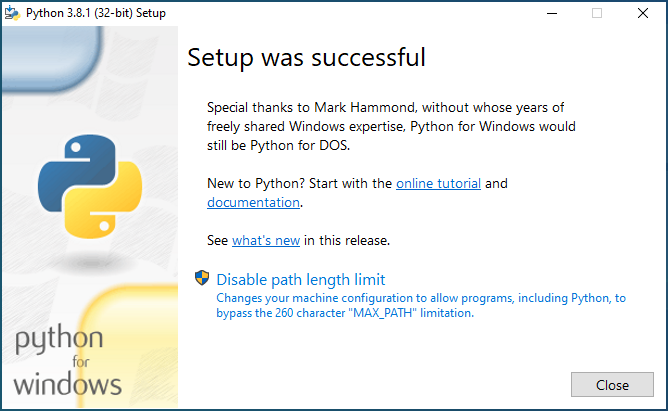

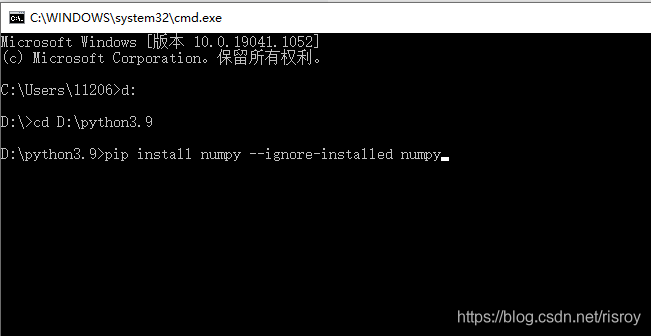

Many Unix-compatible operating systems, such as Mac OS X and some Linux distributions, have Python installed by default. Some Windows machines also have Python installed: at this writing we’re aware of computers from Hewlett-Packard and Compaq that include Python, and apparently some of HP/Compaq’s administrative tools are written in Python.

On a Mac, Homebrew installs a new version of Python (by default the latest 2.x version available) and sets it as the default. I will also stay with Python 2 for now, but see below for some info on Python 3. You will need to download and install Python and NumPy. Download the tarfile for MACS and extract it (by double-clicking on a Mac).

Developers work with a variety of technologies. Next, we want a virtualenv. Once the work environment is activated, we can install NumPy, SciPy or Matplotlib into it with `conda install numpy scipy matplotlib`. At this point, your prompt should indicate that you are using the work environment.

To check that PySpark works, call `findspark.init()` first and only then `import pyspark`. After that, run `from pyspark.sql import SparkSession`, create a `SparkSession` named `spark` with `getOrCreate()`, build a data frame with `df = spark.sql('''select 'spark' as hello ''')`, and print it with `df.show()`.

The os module is a part of the standard library, or stdlib, within Python 3. This means that it comes with your Python installation, but you still must import it.
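As a small illustration of the point about the os module, here is a minimal sketch that uses only the standard library. The file name `input.txt` and the `data` folder are made up for the example and are not from the original post.

```python
import os  # ships with Python, but still has to be imported explicitly

# Absolute path of the directory the script is running in.
cwd = os.getcwd()

# Build a path in a portable way; "data" and "input.txt" are hypothetical names.
joined = os.path.join(cwd, "data", "input.txt")

# Split the path back into its last component.
file_name = os.path.basename(joined)

print("working directory:", cwd)
print("file name:", file_name)
```

Nothing has to be installed with pip or conda for this to run, which is what "part of the standard library" means in practice.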

I Have Two Versions Of Python On My , Scipy For Python3 Upgrade The Python

This post shows how to derive a new column in a Spark data frame from a JSON array string column.

In Python 3 you will need to encode the Unicode string into a byte string with a method like `"Hello World!".encode('UTF-8')` when you pass it into the socket function or method, or you will get an error telling you that it only takes byte strings.

On Amazon EMR, to upgrade the Python version that PySpark uses, point the PYSPARK_PYTHON environment variable for the spark-env classification to the directory where Python 3.4 or 3.6 is installed. For Amazon EMR version 5.30.0 and later, Python 3 is the system default.
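The byte-string requirement for sockets mentioned above can be tried without any network setup. The sketch below is only an illustration: it uses `socket.socketpair()` to get two connected sockets inside one process, which is a choice made for this example and not something the original post does.

```python
import socket

# Two already-connected sockets in the same process, so no server is needed.
left, right = socket.socketpair()

try:
    message = "Hello World!"

    # Passing a str directly fails in Python 3: sockets only accept bytes.
    try:
        left.sendall(message)
        error_seen = False
    except TypeError:
        error_seen = True

    # Encode the Unicode string into a byte string before sending.
    payload = message.encode("UTF-8")
    left.sendall(payload)

    # The receiving side gets bytes back and decodes them to a str again.
    received = right.recv(1024)
    text = received.decode("UTF-8")
finally:
    left.close()
    right.close()

print(error_seen, payload, text)
```

The same encode and decode steps apply when the socket is connected to a real server.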

We don't have any change log information yet for version 3.9.1 of Python.

PySpark is a Python API for Spark, which is a parallel and distributed engine for running big data applications. Spark Context is the heart of any Spark application, and the PySpark shell links the Python API to Spark core and initializes the Spark Context. Getting started with PySpark took me a few hours, when it shouldn’t have, as I had to read a lot of blogs and documentation to debug some of the setup issues.

System: CentOS 7 64-bit (Python version 2.7.5). Goal: install PySpark so that the default Python version it starts with is Python 3 (python3.7.3). (1) First install the dependency gcc (run as administrator or with equivalent privileges): `yum -y install gcc`. (2) Install the other dependencies (optional, but skipping them may cause errors during installation): `yum -y install zlib-devel bzip2-dev`.
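One common way to control which interpreter PySpark starts with is the PYSPARK_PYTHON environment variable. The sketch below only prepares an environment dictionary and does not launch Spark. The helper name `pyspark_env` is hypothetical, and the choice to default to the currently running interpreter is an assumption made for this example.

```python
import os
import sys


def pyspark_env(python_executable=sys.executable, base_env=None):
    """Return a copy of the environment with PYSPARK_PYTHON set.

    python_executable: path to the interpreter PySpark should use
                       (defaults to the interpreter running this script).
    base_env: mapping to copy from; defaults to the current os.environ.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["PYSPARK_PYTHON"] = python_executable
    return env


env = pyspark_env()
print("PYSPARK_PYTHON =", env["PYSPARK_PYTHON"])
print("Running on Python", sys.version_info.major)
```

A dictionary like this could be passed as the `env` argument of `subprocess.run` when starting a PySpark script, leaving the system-wide default Python untouched.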

Python: 3.7, 2.7. PySpark: 1.6.2 (precompiled package). OS: Mac OSX 10.11.1. After reading the above, do you have a better understanding of how to import PySpark into Python? If you would like to learn more, follow the 亿速云 industry news channel.

With these classes in place, we can set up a 3-dimensional table where each row completely describes a state. The first element in the row is the current state, and the rest of the elements are each a row indicating what the type of the input can be, the condition that must be satisfied in order for this state change to be the correct one, and the action that happens during transition.

Python Math: Compute Euclidean distance (Exercise-79 with Solution). Starting with Python 3.8, the math module directly provides the `dist` function, which returns the Euclidean distance between two points (given as tuples or lists of coordinates): after `from math import dist`, the call `dist((1, 2, 6), (-2, 3, 2))` returns 5.0990195135927845.
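On interpreters older than Python 3.8, where `math.dist` is not available, the same distance can be computed by hand. This is a minimal sketch; the function name `euclidean_distance` is made up for this example.

```python
import math


def euclidean_distance(p, q):
    """Euclidean distance between two points given as equal-length sequences."""
    if len(p) != len(q):
        raise ValueError("both points must have the same number of coordinates")
    # Square root of the sum of squared coordinate differences.
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


# Same points as in the math.dist example: the squared differences are
# 9, 1 and 16, so the result is the square root of 26.
print(euclidean_distance((1, 2, 6), (-2, 3, 2)))
```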


Author: Wanda
